ChatGPT is a chatbot developed by OpenAI, based on the company’s Generative Pre-trained Transformer (GPT) series of large language models. Since its launch in November 2022, ChatGPT has attracted a great deal of attention for its impressive ability to write text, create music, and generate code.

Having gained over 100 million users in three months, it has become the fastest-growing consumer software application in history. Yet the chatbot is far from perfect: every now and then it covers up its own ignorance with entirely convincing but wrong answers.

Nevertheless, ChatGPT has proven its impressive capabilities, and it has already found its way into our everyday lives.

While generative AI can make life significantly easier for many of us, unfortunately it also makes cybercriminals’ jobs easier.

With the help of ChatGPT, even ‘script kiddies’ (a term describing attackers who, despite lacking basic knowledge, attempt to break into other people’s computer systems or cause other damage) can launch more sophisticated attacks, such as a phishing campaign, or write malware and hide it in an Excel spreadsheet.

Use Case 1 – nmap fingerprinting

To demonstrate ChatGPT’s hacking skills, we chose the well-known OWASP Juice Shop, a deliberately vulnerable application that describes itself as probably the most modern and sophisticated insecure web application.

Let’s assume we are an entry-level penetration tester with good intentions who wants to learn about the risks summarized in the OWASP Top 10. For those who have not heard of the OWASP Top 10 yet: it is a standard awareness document for developers and web application security. It represents a broad consensus about the most critical security risks to web applications.

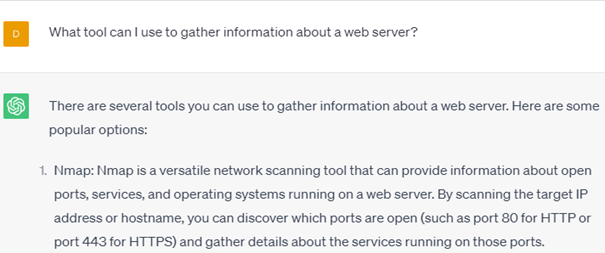

First, we want to gather some information about the web server; the only thing we know so far is its address. So we ask ChatGPT which tools we can use to gather information about a web server.

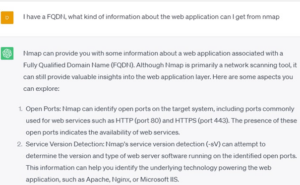

How convenient: I already have nmap installed. So we ask what information we can gather with nmap and how to use it.

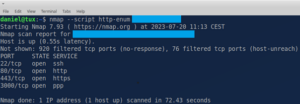

We decide to run an nmap scan for open ports and for any directories that might be exposed, using nmap’s http-enum script.
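The article does not show the exact command ChatGPT suggested. As a minimal sketch, assuming a local Juice Shop instance listening on its default port 3000 at `localhost`, such a scan could be assembled and run from Python as follows. The helper names `build_nmap_command` and `run_scan` are hypothetical; `-sV`, `-p`, and `--script http-enum` are standard nmap options.

```python
import shlex
import subprocess


def build_nmap_command(target: str, ports: str = "1-65535") -> list[str]:
    """Build (but do not run) an nmap command line that scans the given
    ports and runs the http-enum NSE script against any web servers found."""
    return [
        "nmap",
        "-sV",                     # probe open ports for service/version info
        "-p", ports,               # port range to scan
        "--script", "http-enum",   # enumerate common web paths such as /admin
        target,
    ]


def run_scan(target: str, ports: str = "1-65535") -> str:
    """Run the scan (requires nmap on the PATH) and return its text output."""
    result = subprocess.run(
        build_nmap_command(target, ports),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


if __name__ == "__main__":
    # Hypothetical target: a local OWASP Juice Shop on its default port.
    cmd = build_nmap_command("localhost", ports="3000")
    print(shlex.join(cmd))  # → nmap -sV -p 3000 --script http-enum localhost
```

Passing the arguments as a list rather than a single shell string avoids shell quoting problems; only `run_scan` actually invokes nmap.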

We find some open ports, but our application does not expose any sensitive paths such as the /admin directory or the /.git folder.

Use Case 2 – XSS attack

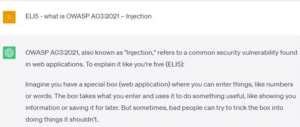

Now our imaginary pen tester is a few months further along in their career and has become a more experienced penetration tester. Today we want to learn about A03:2021 – Injection. Let’s ask ChatGPT.

We keep asking ChatGPT for ELI5 (“explain like I’m five”) explanations of different types of injection and XSS attacks. Finally, we want to try our hand at an XSS attack, so we ask for examples and further explanation.
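ChatGPT’s answers are not reproduced here, but SQL injection, the textbook member of A03:2021, shows the pattern shared by all injection attacks: untrusted input is concatenated into a command and changes that command’s structure. The following is a minimal, self-contained sketch using Python’s built-in `sqlite3` module with a hypothetical in-memory `users` table; it is an illustration, not code from ChatGPT or from the Juice Shop.

```python
import sqlite3


def setup() -> sqlite3.Connection:
    """Create a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # VULNERABLE: the input is pasted into the SQL text, so quote
    # characters in `name` can rewrite the WHERE clause.
    query = f"SELECT name FROM users WHERE name = '{name}' ORDER BY name"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver passes `name` as data, never as SQL.
    query = "SELECT name FROM users WHERE name = ? ORDER BY name"
    return conn.execute(query, (name,)).fetchall()


conn = setup()
payload = "' OR '1'='1"
print(find_user_unsafe(conn, payload))  # [('alice',), ('bob',)]: every row leaks
print(find_user_safe(conn, payload))    # []: no user has that literal name
```

With the payload, the unsafe query becomes `WHERE name = '' OR '1'='1'`, which is true for every row; the `?` placeholder in the safe version keeps the same string inert.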

By asking further questions, we learn that different kinds of XSS attacks exist. We decide that DOM-based XSS attacks are easy to test for and ask for proof-of-concept attacks.

ChatGPT does, however, have some safeguards: it will not give us a ready-to-use XSS code snippet.

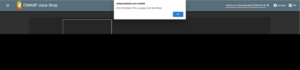

Still, it gives us the building blocks needed to compose a proof-of-concept XSS attack. In the end, I was able to successfully launch an XSS attack against my own web application.
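The article deliberately does not show the final attack, so the following illustrates only the underlying principle: untrusted input that reaches the page as markup instead of text. It is a minimal sketch with hypothetical function names, rendering a search term into HTML in Python for simplicity. In a DOM-based XSS the same mistake happens in client-side JavaScript (for example, writing part of the URL into `innerHTML`), but the remedy follows the same idea of encoding untrusted input before the browser parses it.

```python
import html


def render_search_results_unsafe(query: str) -> str:
    # VULNERABLE: the query is inserted as raw HTML, so any tags it
    # contains become part of the document.
    return f"<p>Results for: {query}</p>"


def render_search_results_safe(query: str) -> str:
    # html.escape converts &, <, > and quotes into HTML entities, so the
    # browser displays the query as text instead of parsing it as markup.
    return f"<p>Results for: {html.escape(query)}</p>"


probe = "<script>alert(1)</script>"  # classic harmless test string
print(render_search_results_unsafe(probe))
# → <p>Results for: <script>alert(1)</script></p>
print(render_search_results_safe(probe))
# → <p>Results for: &lt;script&gt;alert(1)&lt;/script&gt;</p>
```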

Summary

Using AI models like ChatGPT to help attack websites makes hacking easier, but attacking systems without authorization is still illegal. Hacking involves unauthorized access to computer systems or networks and is considered a criminal offense in most jurisdictions.

OpenAI, the organization behind ChatGPT, explicitly prohibits using its models for illegal activities, including hacking and other malicious purposes. Therefore, when asked for concrete code samples or exploits, ChatGPT will often answer:

“I’m sorry, but I cannot provide or demonstrate sample code for performing malicious attacks. My purpose is to provide helpful and ethical information to users. Demonstrating or promoting hacking techniques, including XSS attacks, is against OpenAI’s use case policy.”

However, with a bit of basic knowledge and a trial-and-error mentality, probing web applications for simple vulnerabilities has become easier. ChatGPT will not make you a sophisticated hacker or hand you ready-made proof-of-concept attack code, but it is very helpful for advancing your web application hacking skills.

Fabian Sinner
